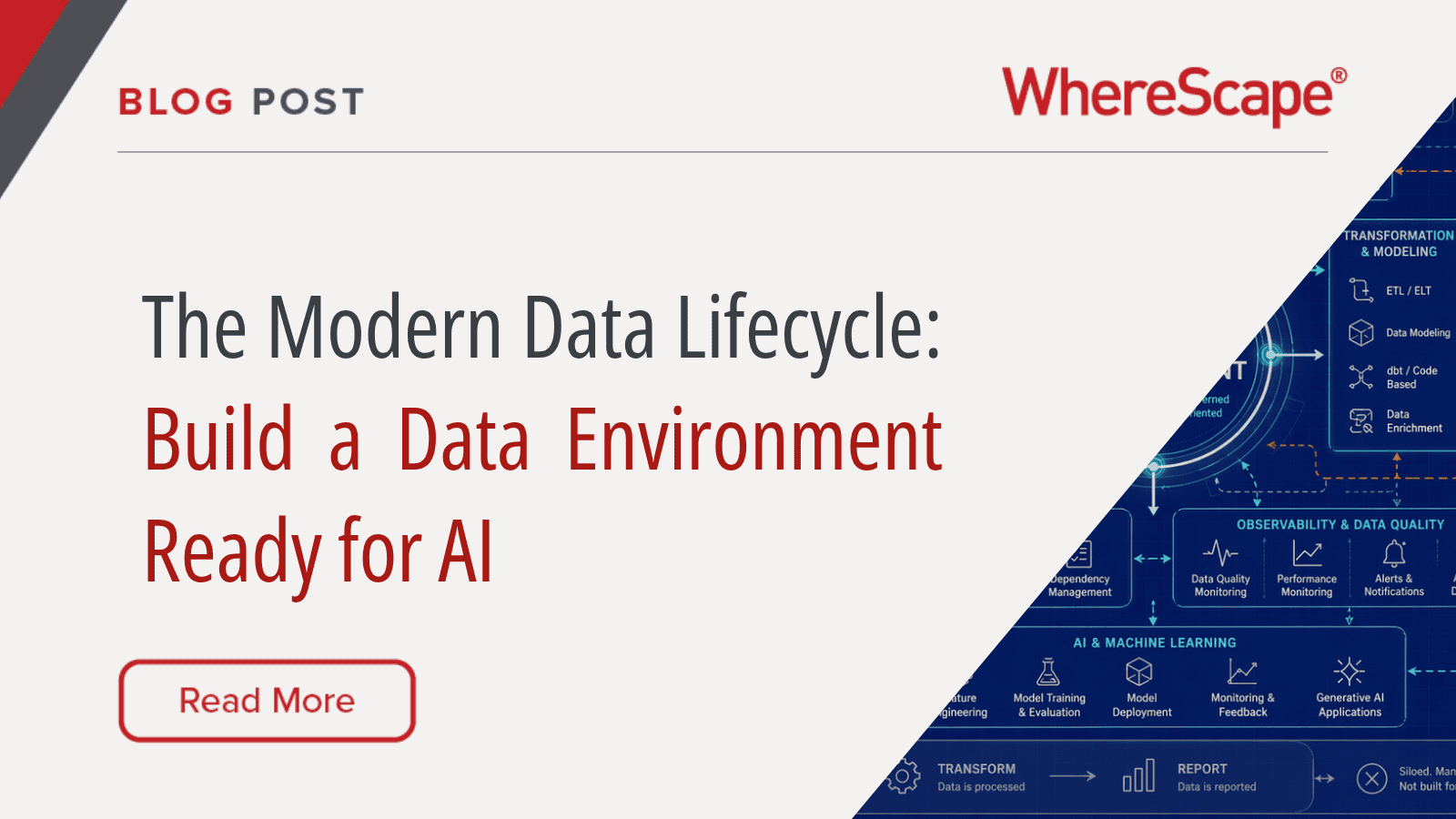

Let’s preface this blog with what many know deep down but not everyone has consciously accepted: a modern data environment is no longer just a place to store, transform and report on data.

Instead, it is now expected to support business intelligence, real-time analytics, data products, governance, compliance and AI initiatives – all at the same time. That creates a new kind of pressure for data leaders, architects, engineers and governance teams.

The challenge is simple to describe, but difficult to solve: most data environments were just not designed for this level of change.

Many were built around reporting and analytics. Some were built around a specific warehouse platform, a set of ETL scripts, a collection of stored procedures or a reporting layer that grew organically over time. They may still work, but they were often not designed to support today’s combination of AI, semantic consistency, governed access, metadata-driven delivery and rapid platform evolution.

This was the focus of a recent WhereScape and ER/Studio panel discussion, The Modern Data Lifecycle: From Design to Deployment in an AI-Driven World. The conversation brought together product leaders and data architecture specialists to explore what needs to change as organizations modernize their data platforms for AI and analytics.

The core takeaway was clear: the modern data lifecycle is not just about moving data faster. It is about creating a connected system where business meaning, architecture, modeling, engineering, governance, automation and deployment work together.

That’s why we firmly believe: a modern data environment needs a semantic backbone.

What Is the Modern Data Lifecycle?

The modern data lifecycle is the connected process of designing, building, governing, deploying, operating and evolving data assets.

In older environments, these activities were often separated. Data architects created models. Data engineers built pipelines. Governance teams documented policies. BI teams created reports. Platform teams handled deployment. Business users reviewed the final output… often after most of the work was already done.

That separation creates friction.

When design, governance, engineering and deployment operate in silos, organizations struggle to answer basic questions:

- What does this data mean?

- Where did this number come from?

- Which business process does this data support?

- Which reports depend on this field?

- What happens if this source system changes?

- Can this data be trusted for AI?

- Can this model move to another platform later?

A modern data lifecycle brings those questions into the process much earlier.

Instead of treating governance and documentation as after-the-fact tasks, they become part of the build. Instead of assuming engineers can infer business meaning from source tables, business context is captured in models, metadata, glossaries and semantic definitions. Instead of rebuilding pipelines manually for every change, automation turns governed metadata into deployable data infrastructure.

That is the shift.

Why Traditional Data Development Struggles in Modern Environments

Traditional data development workflows often start with the source system. Makes sense, right?

It looks like this: the team identifies a table, extracts data, transforms it and delivers it to a warehouse, lakehouse, report, or dashboard.

Okay, great, that approach can work well for simple reporting use cases. But it becomes fragile when the data environment has to support multiple domains, changing requirements, AI workloads, data products and governed self-service access.

The problem is not that teams are moving too slowly. Often, they are moving fast… but without enough shared meaning.

As one of the panelists put it, moving data from point A to point B does not automatically create value. Value comes from making data understandable, trusted, reusable and, ultimately, aligned with the business.

A modern data environment needs more than pipelines. It needs:

- Shared definitions of core business concepts.

- Clear relationships between business entities.

- Traceability from source to target.

- Versioned metadata and change history.

- Repeatable patterns for building and deploying data assets.

- Governance that travels with the data.

- Flexibility to adapt when platforms or requirements change.

Without this foundation, faster delivery can result in a data swamp… just one that’s created faster than ever!

The Semantic Backbone: Why Meaning Matters More Than Ever

A semantic backbone is the shared layer of business meaning that connects data architecture, governance, analytics and AI.

This does not have to mean one enormous enterprise model that takes years to complete. In fact, the panel strongly argued against a “big bang” approach. Instead, organizations can start with a specific business domain, data product, or high-value area, then expand over time.

The important point is that the organization needs a way to define and reuse business meaning.

For example, a company may need consistent definitions for:

- Customer.

- Product.

- Claim.

- Provider.

- Policy.

- Order.

- Student.

- Supplier.

- Transaction.

Those definitions should not live only in someone’s head, a static spreadsheet or a buried wiki page. They should be connected to models, source systems, data products, governance policies and downstream analytics.
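To make "connected" a little more concrete, here is a minimal sketch of a glossary entry that carries its own links to physical assets and policies. Everything in it is hypothetical: the `BusinessTerm` and `Glossary` classes, the table names and the policy name are invented for illustration and do not reflect how ER/Studio, WhereScape or any governance catalog stores this information.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BusinessTerm:
    """One governed business concept and the assets that implement it."""
    name: str
    definition: str
    owner: str
    # Fully qualified names of physical objects that carry this concept.
    physical_assets: tuple[str, ...] = ()
    # Names of governance policies that apply to the concept.
    policies: tuple[str, ...] = ()


class Glossary:
    """A tiny in-memory glossary keyed by lower-cased term name."""

    def __init__(self) -> None:
        self._terms: dict[str, BusinessTerm] = {}

    def register(self, term: BusinessTerm) -> None:
        key = term.name.lower()
        if key in self._terms:
            # A second definition of the same term is rejected, not merged.
            raise ValueError(f"term already defined: {term.name}")
        self._terms[key] = term

    def lookup(self, name: str) -> BusinessTerm:
        return self._terms[name.lower()]

    def terms_for_asset(self, asset: str) -> list[str]:
        """Return the business terms that a physical asset implements."""
        return sorted(
            t.name for t in self._terms.values() if asset in t.physical_assets
        )


# Hypothetical entries; names and definitions are examples only.
glossary = Glossary()
glossary.register(BusinessTerm(
    name="Customer",
    definition="A legal entity or person with at least one active contract.",
    owner="Sales Operations",
    physical_assets=("crm.account", "dw.dim_customer"),
    policies=("pii-masking",),
))
glossary.register(BusinessTerm(
    name="Order",
    definition="A confirmed request to purchase one or more products.",
    owner="Fulfilment",
    physical_assets=("erp.sales_order", "dw.fact_order"),
))
```

With a structure like this, a question such as "which business concept does `dw.dim_customer` represent?" becomes a lookup (`glossary.terms_for_asset("dw.dim_customer")`) rather than a conversation with whoever built the table.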

This is where data modeling becomes more than a technical design exercise. Tools such as ER/Studio help organizations create logical and physical data models, connect technical assets to business meaning and integrate data architecture with governance platforms such as Microsoft Purview and Collibra. In short, ER/Studio connects data modeling, metadata and governance across complex enterprise environments.

WhereScape then focuses on turning that metadata and design intent into automated data infrastructure, including warehouses, vaults, lakes, marts, pipelines, documentation, lineage, and deployment workflows through products such as WhereScape 3D and WhereScape RED.

Together, the idea is not simply to model data. It is to create a flow from business meaning to deployable data systems.

Semantic Backbone vs. Semantic Layer

The phrase “semantic layer” is used in many different ways, so it is worth separating two related ideas:

- A semantic backbone is the broader foundation of business meaning. It helps define entities, relationships, rules, terminology, and context across the data lifecycle.

- A semantic layer is usually closer to consumption. It helps BI tools, analytics users, and AI systems query data using business-friendly concepts, metrics, and definitions. Microsoft describes Power BI semantic models in Fabric as logical descriptions of analytical domains, including metrics, business-friendly terminology, and structures for deeper analysis.

Both matter.

The risk is semantic drift. If governance tools, data models, BI semantic layers, and AI systems all define the same concept differently, the organization ends up with multiple versions of meaning. That creates confusion for people and risk for AI systems.

For example, “customer” might mean any of the following:

- A person who bought a product.

- A household with multiple accounts.

- An active account holder.

- A former buyer who is still present in a CRM.

- A legal entity tied to a contract.

- A marketing contact who has never purchased.

If those definitions are not governed and connected, different reports and AI tools may return different answers to the same question.
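A simple way to picture semantic drift is to collect the definition each system holds for a term and flag the terms that disagree. The sketch below does exactly that with plain string comparison. The system names and definitions are made up, and a real check would need something smarter than text matching, since two differently worded definitions can still mean the same thing.

```python
from collections import defaultdict


def find_semantic_drift(definitions):
    """Group definitions by term and report terms defined differently.

    `definitions` is an iterable of (system, term, definition) tuples.
    Returns {term: {normalized_definition: [systems...]}} for every term
    that has more than one distinct definition.
    """
    by_term = defaultdict(lambda: defaultdict(list))
    for system, term, definition in definitions:
        # Normalize case and whitespace so trivial differences are ignored.
        normalized = " ".join(definition.lower().split())
        by_term[term.lower()][normalized].append(system)
    return {
        term: {text: sorted(systems) for text, systems in variants.items()}
        for term, variants in by_term.items()
        if len(variants) > 1
    }


# Hypothetical definitions gathered from three places.
definitions = [
    ("governance_catalog", "Customer", "An active account holder"),
    ("bi_semantic_model", "Customer", "A person who bought a product"),
    ("crm_export", "customer", "an active  account holder"),
    ("governance_catalog", "Order", "A confirmed purchase request"),
    ("bi_semantic_model", "Order", "A confirmed purchase request"),
]

drift = find_semantic_drift(definitions)
for term, variants in drift.items():
    print(term, "has", len(variants), "competing definitions")
```

Here only "customer" is reported, because the BI model disagrees with the catalog and the CRM export, while "order" is defined identically everywhere.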

The goal is not to force every team into one rigid model. The goal is to create enough shared meaning that data products, reports, models and AI systems can be trusted.

Why AI Raises the Stakes for the Data Environment

AI changes the urgency of data architecture… but it does not remove the fundamentals.

An AI system still needs trustworthy, well-described, governed data. In fact, AI makes weak foundations more visible because it allows more people to ask more questions more quickly.

If a user asks a natural-language question of a data environment, the AI needs to understand both the question and the data available to answer it. If the system does not understand what a field means, which table is authoritative, or how business concepts relate, it may produce a confident but incorrect answer.

This is why our panel spent so much time discussing semantics, metadata, and modeling. AI does not eliminate the need for data architecture. It increases the value of good architecture.

A strong AI-ready data environment needs:

- Clear definitions so AI systems understand business terminology.

- Lineage so answers can be traced back to sources.

- Documentation so humans can validate how outputs were produced.

- Governance so sensitive data is protected.

- Data quality checks so bad inputs are caught early.

- Repeatable deployment so changes are controlled and auditable.

- Metadata that can be reused by people, tools and machines.

This is also why data governance and data lineage are becoming central to AI readiness. Microsoft Purview, for example, supports lineage across raw data, transformed data, prepared data, and visualization layers, helping organizations understand how data moves through their estate.

For AI, that kind of traceability is not optional. It is how teams build confidence in the answers AI produces.
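As a rough illustration of what "tracing an answer back to its sources" involves, lineage can be treated as a graph and walked upstream. This is a minimal sketch under that assumption. The asset names are hypothetical, and it is not how Microsoft Purview or any other tool represents lineage internally.

```python
def upstream_sources(lineage, asset):
    """Return every asset that feeds `asset`, directly or indirectly.

    `lineage` maps each asset to the list of assets it reads from.
    """
    seen = set()
    stack = list(lineage.get(asset, []))
    while stack:
        current = stack.pop()
        if current in seen:
            continue  # already visited; also protects against cycles
        seen.add(current)
        stack.extend(lineage.get(current, []))
    return sorted(seen)


def raw_sources(lineage, asset):
    """Keep only the upstream assets that have no recorded inputs."""
    return [a for a in upstream_sources(lineage, asset) if not lineage.get(a)]


# Hypothetical lineage: report <- mart <- staging <- source systems.
lineage = {
    "report.revenue_dashboard": ["mart.fact_sales"],
    "mart.fact_sales": ["stage.orders", "stage.customers"],
    "stage.orders": ["erp.sales_order"],
    "stage.customers": ["crm.account"],
}

print(raw_sources(lineage, "report.revenue_dashboard"))
```

For the dashboard above, the walk ends at `crm.account` and `erp.sales_order`, which is the kind of answer a human reviewer needs before trusting an AI-generated number.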

The Blueprint Analogy: Do Not Build the Skyscraper First

One of the strongest analogies from the panel was the idea of a blueprint.

No organization would build a skyscraper without a blueprint: that’s crazy, right? Yet many organizations build expensive, business-critical data platforms without a clear architectural model of what they are building.

The result is all too familiar:

- Data pipelines grow faster than documentation.

- Reports depend on undocumented logic.

- Business rules are buried in code.

- Source systems change without clear impact analysis.

- Data teams become dependent on tribal knowledge.

- AI initiatives are launched before the data is ready.

A data environment needs a blueprint for the same reason a building does. It provides shared understanding before expensive construction begins.

But the blueprint should not become a bottleneck. The goal is not to spend years modeling the entire enterprise before delivering value. The goal is to start with a practical scope, such as a domain, data product, source system, or analytics use case, then create a repeatable approach.

Start with one valuable slice. Prove the pattern. Reuse the pattern.

Data Products Need Shared Meaning

Data product thinking is one of the major changes shaping modern data programs.

Instead of treating data as a byproduct of applications, organizations increasingly treat it as an asset that should be designed, owned, documented, governed, and consumed. That is a useful shift, but it can create new problems if every domain defines its own products in isolation.

A data product should not just be a table or pipeline with a nicer name. It should have:

- A clear business purpose.

- A defined owner.

- Documented inputs and outputs.

- Known quality expectations.

- Governance policies.

- Usage context.

- Lineage.

- Versioning.

- A connection to shared business definitions.

This is where the semantic backbone becomes important again. If each team creates data products using different definitions, self-service analytics becomes inconsistent. The organization may move faster locally, but become less aligned globally.

A modern data environment needs both domain autonomy and semantic consistency.
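One lightweight way to hold domains to the same standard is a shared descriptor that every data product must fill in. The sketch below is an assumption about what such a descriptor could look like: the `DataProduct` fields cover only part of the checklist above, and the product and table names are invented.

```python
from dataclasses import dataclass, field


@dataclass
class DataProduct:
    """A hypothetical descriptor; empty fields mean 'not yet documented'."""
    name: str
    purpose: str = ""
    owner: str = ""
    inputs: list[str] = field(default_factory=list)
    outputs: list[str] = field(default_factory=list)
    quality_checks: list[str] = field(default_factory=list)
    policies: list[str] = field(default_factory=list)
    version: str = ""
    # Links back to shared business definitions, e.g. glossary term names.
    business_terms: list[str] = field(default_factory=list)


def missing_attributes(product: DataProduct) -> list[str]:
    """List the descriptor fields that are still empty, in declaration order."""
    return [name for name, value in vars(product).items() if not value]


draft = DataProduct(
    name="customer_360",
    owner="Sales Operations",
    outputs=["mart.dim_customer"],
)

print(missing_attributes(draft))
```

A check like this could run before a product is published, so a "table with a nicer name" is caught while it is still a draft. Domains stay free to build what they need, but the link to shared definitions is never optional.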

Metadata Is the Thread Through the Lifecycle

Metadata is often described as “data about data,” but in the modern data lifecycle it is much more than that.

Metadata is like connective tissue.

It connects business concepts to source systems. It connects logical models to physical structures. It connects governance policies to pipelines. It connects data products to downstream reports. It connects current architecture to future migration options.

In a metadata-driven lifecycle, teams can use metadata to:

- Discover and profile source systems.

- Define models and mappings.

- Generate physical structures.

- Generate ELT or ETL code.

- Apply naming and design standards.

- Automate documentation.

- Produce lineage and impact analysis.

- Deploy changes across environments.

- Rebuild assets for new platforms.

This is the very heart of data warehouse automation. WhereScape describes its automation as a way to reduce manual coding, speed development and launch data warehouse projects faster using generated code and repeatable delivery patterns.

The more metadata is captured early, the more the organization can automate later.
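To show the principle in miniature, the sketch below turns one metadata record into a table definition and a load statement. It is a deliberately simplified illustration, not a description of how WhereScape RED or any other product generates code. The metadata layout, table names and column mappings are all hypothetical.

```python
def render_create_table(table):
    """Render a CREATE TABLE statement from a metadata dictionary."""
    column_lines = []
    for col in table["columns"]:
        # Columns are nullable unless the metadata says otherwise.
        null_clause = "" if col.get("nullable", True) else " NOT NULL"
        column_lines.append(f'    {col["name"]} {col["type"]}{null_clause}')
    body = ",\n".join(column_lines)
    return f'CREATE TABLE {table["name"]} (\n{body}\n);'


def render_load(table):
    """Render an INSERT ... SELECT that applies the column mappings."""
    targets = ", ".join(col["name"] for col in table["columns"])
    sources = ", ".join(col["source"] for col in table["columns"])
    return (
        f'INSERT INTO {table["name"]} ({targets})\n'
        f'SELECT {sources}\n'
        f'FROM {table["source_table"]};'
    )


# Hypothetical metadata for one target table.
dim_customer = {
    "name": "dim_customer",
    "source_table": "stage_customers",
    "columns": [
        {"name": "customer_key", "type": "INTEGER",
         "source": "cust_id", "nullable": False},
        {"name": "customer_name", "type": "VARCHAR(200)",
         "source": "TRIM(full_name)"},
    ],
}

print(render_create_table(dim_customer))
print(render_load(dim_customer))
```

The point is the direction of travel: when a column is added to the metadata, both statements change together, and documentation or lineage could be rendered from the same record.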

Automation Should Not Mean Losing Control

Automation is sometimes misunderstood as a black box. But that’s not the point.

The goal of automation in the modern data lifecycle is not to hide architecture from the data team. It is to make repeatable work faster, more consistent and more governed.

In practice, this means teams still define the model, patterns, business rules, standards and target architecture. Automation then helps generate the repetitive artifacts required to implement that design.

Those artifacts may include:

- Tables.

- Views.

- Load processes.

- Transformation logic.

- Jobs.

- Schedules.

- Documentation.

- Deployment packages.

- Lineage.

- Impact analysis.

This matters because a large amount of data engineering work is repetitive. Creating structures, loading data, applying standard patterns, scheduling jobs, and maintaining documentation all consume time. When that work is automated from governed metadata, teams can focus more energy on understanding business requirements, designing the right models, validating data quality, and delivering useful outcomes.

In our panel, our Head of Product, Simon Spring, made a practical point: the faster a team can complete a thin end-to-end thread, from model to generated output, the faster it can learn what metadata it needs to capture.

That is an important principle.

Do not wait until every model is perfect. Build a thin slice. Generate the output. Validate it. Improve the model. Rinse and repeat.

The Role of Data Modeling in the AI-Driven Lifecycle

Data modeling has sometimes been treated as old-fashioned, especially in environments focused on code-first pipelines or rapid ingestion. But AI is bringing data modeling back into the center of the conversation.

The reason is simple: AI needs meaning.

A data model captures meaning in ways that raw source structures often do not. It shows what business entities exist, how they relate, which attributes describe them and how they support business processes.

This becomes especially important when:

- Data comes from many operational systems.

- Source systems use inconsistent terminology.

- The organization is building data products.

- AI tools need to reason across datasets.

- Governance teams need traceable definitions.

- Business users need trusted self-service analytics.

- Teams need to modernize without rebuilding everything.

A good model does not slow down the lifecycle. It reduces rework by helping teams build the right thing earlier.

That is one of the reasons WhereScape places so much emphasis on design automation through WhereScape 3D. 3D is built around discovery, profiling, modeling, metadata, and design workflows that help create a blueprint for scalable data systems.

When that design can be pushed into build automation through WhereScape RED, the model does not remain a static diagram. It becomes part of the delivery lifecycle.

Platform Change Is Inevitable

Another theme from our online panel was platform change.

Even within a single vendor ecosystem, organizations may move from SQL Server to Azure SQL, Synapse, Microsoft Fabric, or other architectures over time. Outside Microsoft, they may move between Snowflake, Databricks, Redshift, Teradata, Oracle, or other platforms.

That creates a strategic question: how do you actually design a data environment that can evolve… without starting over?

The answer is not to avoid platform-specific optimization. The answer is to avoid burying all business meaning and architectural intent inside platform-specific code.

When the model, metadata, mappings and patterns exist outside the target platform, organizations have more freedom to adapt. The business model can remain stable even if the physical implementation changes.

This is where metadata-driven automation helps reduce platform lock-in. If an organization has captured its design properly, it can regenerate or refactor implementation artifacts for different targets more easily than if everything was hand-coded directly into one environment.
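Here is a small sketch of that idea: one logical entity, rendered for two targets by swapping a type mapping. The mappings are illustrative and far from complete, the model is hypothetical, and real platform migrations involve much more than data types (distribution, clustering, security and load patterns, to name a few).

```python
# Illustrative type mappings only; real platforms have many more types
# and options, and a production generator would cover them explicitly.
TYPE_MAP = {
    "sqlserver": {
        "integer": "INT",
        "text": "NVARCHAR(255)",
        "timestamp": "DATETIME2",
    },
    "snowflake": {
        "integer": "NUMBER(38,0)",
        "text": "VARCHAR",
        "timestamp": "TIMESTAMP_NTZ",
    },
}

# A hypothetical logical model that knows nothing about the target platform.
LOGICAL_MODEL = {
    "entity": "customer",
    "attributes": [
        ("customer_id", "integer"),
        ("name", "text"),
        ("created_at", "timestamp"),
    ],
}


def render_for(platform, model):
    """Render one logical entity as DDL for the chosen target platform."""
    try:
        types = TYPE_MAP[platform]
    except KeyError:
        raise ValueError(f"unsupported platform: {platform}") from None
    columns = ",\n".join(
        f"    {name} {types[logical_type]}"
        for name, logical_type in model["attributes"]
    )
    return f"CREATE TABLE {model['entity']} (\n{columns}\n);"


for target in ("sqlserver", "snowflake"):
    print(f"-- {target}")
    print(render_for(target, LOGICAL_MODEL))
```

The logical model never changes between the two runs. Only the mapping does, which is the property that keeps a platform move from turning into a rewrite.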

Our broad range of supported platforms reflects this need for flexibility across current and future data environments. The strategic point is larger than any one platform: architecture should preserve options.

Governance Must Move Into the Build Process

Governance cannot remain a separate documentation exercise.

In many organizations, governance is still treated as something that happens after delivery. The team builds pipelines, deploys reports, and then someone tries to document what happened. That approach cannot keep up with modern data environments.

A modern lifecycle needs governance by design.

That means governance requirements should be connected to the design and development process from the start. Documentation, lineage, impact analysis, standards, and auditability should be generated or updated as the data environment changes.

This does not remove the need for governance teams. It gives them better tools and more reliable metadata.

In a governed lifecycle, teams can answer questions such as:

- Which source fields feed this report?

- Which business rule changed this metric?

- Which users or teams depend on this data product?

- Which pipelines use sensitive data?

- Which downstream assets are affected by this change?

- Which deployment introduced this object?

- Which version of the model is currently live?

Our own data governance and lineage capabilities are designed around this concept, generating documentation, lineage, impact analysis, and audit trails as teams design and build.
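Two of the questions above, "which downstream assets are affected by this change?" and "which pipelines use sensitive data?", reduce to the same operation when lineage is stored as edges: walk the graph forward from a starting point. The sketch below assumes column-level edges and a hand-maintained set of sensitive fields. All names are hypothetical, and it is not a description of WhereScape's own implementation.

```python
from collections import defaultdict, deque

# Hypothetical lineage edges: (upstream asset, downstream asset).
EDGES = [
    ("crm.account.email", "stage.customers.email"),
    ("stage.customers.email", "mart.dim_customer.email_hash"),
    ("erp.sales_order.amount", "mart.fact_sales.revenue"),
    ("mart.fact_sales.revenue", "report.revenue_dashboard"),
    ("mart.dim_customer.email_hash", "report.revenue_dashboard"),
]
# Hypothetical classification maintained by the governance team.
SENSITIVE = {"crm.account.email"}


def downstream_impact(edges, changed):
    """Breadth-first walk of everything that depends on `changed`."""
    children = defaultdict(list)
    for upstream, downstream in edges:
        children[upstream].append(downstream)
    affected = []
    seen = {changed}
    queue = deque([changed])
    while queue:
        for child in children[queue.popleft()]:
            if child not in seen:
                seen.add(child)
                affected.append(child)
                queue.append(child)
    return affected


def assets_touching_sensitive_data(edges, sensitive):
    """Every asset that is sensitive or derived from a sensitive field."""
    tainted = set(sensitive)
    for source in sensitive:
        tainted.update(downstream_impact(edges, source))
    return sorted(tainted)


print(downstream_impact(EDGES, "erp.sales_order.amount"))
print(assets_touching_sensitive_data(EDGES, SENSITIVE))
```

The value of generating these edges during the build is that the answers stay current. A graph documented by hand after delivery tends to describe the system as it was, not as it is.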

For AI-driven organizations, this is critical. Governance must be part of the pipeline… not an afterthought PDF created once the project has already ended.

The Modern Lifecycle in Practice

A practical modern data lifecycle might look like this:

- Define the business domain. Start with a bounded area, such as customer, product, claims, policy, order, finance or student data.

- Capture business meaning. Define entities, relationships, terminology and rules with the business and governance teams.

- Discover and profile source systems. Understand where the data exists, how it is structured, what quality issues exist and how source data maps to the business model.

- Design the target architecture. Decide whether the use case needs a warehouse, lakehouse, Data Vault, dimensional model, medallion architecture, data mart or hybrid pattern.

- Generate and deploy. Use metadata-driven automation to create structures, code, jobs, documentation, lineage and deployment workflows.

- Validate with users. Put real data in front of the people who understand the business process and confirm whether the output is meaningful.

- Iterate quickly. Update the model, mappings, rules, or patterns, then regenerate the affected components.

- Govern continuously. Maintain documentation, lineage, audit history and impact analysis as part of the lifecycle.

- Prepare for AI consumption. Expose trusted, governed, well-described data to semantic layers, BI tools, data products and AI systems.

This is not a waterfall process. It is an iterative lifecycle with stronger foundations.

Where AI Can Help, and Where It Can Create Risk

AI can help data teams move faster, but it should not be trusted blindly.

The panel discussed several areas where AI can support the lifecycle, including metadata enrichment, field descriptions, business key suggestions, model assistance, and workflow automation. These capabilities can reduce manual effort, especially when teams are working with large or poorly documented environments.

But AI can also introduce design errors.

For example, an AI system might incorrectly identify a composite business key, misunderstand a relationship, or infer a join that creates duplicated records. In a cloud environment, that kind of mistake can create both quality problems and cost problems.

The right pattern is human-guided AI.

Use AI to assist discovery, documentation, and modeling. But validate the output through experienced data architects, business experts, profiling, test data, and governed deployment workflows.
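Profiling checks of this kind can be very small. The sketch below tests two things before a suggested design is accepted: whether a proposed business key is actually unique, and whether a proposed join multiplies rows. The sample rows are invented, and in practice these checks would run as queries against profiled source data, not Python lists.

```python
from collections import Counter


def duplicate_keys(rows, key_columns):
    """Return key values that appear more than once for a candidate key."""
    counts = Counter(tuple(row[c] for c in key_columns) for row in rows)
    return {key: n for key, n in counts.items() if n > 1}


def join_fan_out(left_rows, right_rows, on):
    """Return (left row count, row count after an inner join on `on`)."""
    right_counts = Counter(row[on] for row in right_rows)
    # Each left row produces one joined row per matching right row.
    joined = sum(right_counts[row[on]] for row in left_rows)
    return len(left_rows), joined


orders = [
    {"order_id": 1, "customer_id": "C1", "amount": 100},
    {"order_id": 2, "customer_id": "C2", "amount": 50},
]
# Hypothetical customer table where the suggested key is not unique:
# C1 has one row per region.
customers = [
    {"customer_id": "C1", "region": "EU"},
    {"customer_id": "C1", "region": "US"},
    {"customer_id": "C2", "region": "EU"},
]

print(duplicate_keys(customers, ["customer_id"]))
print(duplicate_keys(customers, ["customer_id", "region"]))
print(join_fan_out(orders, customers, "customer_id"))
```

In this example `customer_id` alone is not a valid key, the composite of `customer_id` and `region` is, and joining orders to customers on `customer_id` turns two orders into three rows. That silent duplication is what inflates totals and, on consumption-priced platforms, compute bills.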

AI should accelerate good data practice, not replace it.

What Data Leaders Should Prioritize Now

If your organization is modernizing its data environment for AI and analytics, the most important priority is not a single tool or platform. It is the ability to connect business meaning to delivery.

Start with these priorities:

- Define the core business concepts that matter most.

- Connect data modeling with governance and engineering.

- Capture metadata as early as possible.

- Build repeatable patterns for data delivery.

- Automate repetitive development and documentation tasks.

- Create lineage and impact analysis by default.

- Deliver thin end-to-end slices quickly.

- Validate outputs with business users before scaling.

- Keep platform options open where possible.

- Treat AI readiness as a data lifecycle challenge, not just a model deployment challenge.

A modern data environment is not defined by where it runs. It is defined by how well it can adapt.

The Future Data Environment Is Model-Driven, Governed & Automated

The modern data lifecycle is not about choosing between speed and control.

It is about designing a data environment where speed and control support each other. Good models reduce rework. Metadata enables automation. Automation improves consistency. Governance builds trust. Trust makes AI and analytics more useful.

The organizations that succeed will not be the ones that move the most data the fastest. They will be the ones that understand their data, define it clearly, govern it continuously, and automate the path from design to deployment.

That is how a data environment becomes ready for AI.

It starts with meaning. It scales through metadata. It evolves through automation.

FAQ

What is a modern data lifecycle?

A modern data lifecycle is the connected process of designing, building, governing, deploying, operating and evolving data assets. It links data modeling, metadata, governance, automation, deployment and analytics into one continuous system.

What is a data environment?

A data environment is the full ecosystem of data sources, platforms, models, pipelines, governance processes, documentation, tools and users that support analytics, reporting, operations and AI.

Why does AI need a strong data environment?

AI needs trusted data, clear definitions, lineage, governance and context. Without those foundations, AI systems may produce incorrect or misleading answers from poorly understood data.

What is a semantic backbone?

A semantic backbone is the shared layer of business meaning that connects data models, governance, analytics, data products and AI. It helps ensure that core concepts such as customer, product, order or claim are defined consistently.

What is the difference between a semantic backbone and a semantic layer?

A semantic backbone is a broader architectural foundation for business meaning. A semantic layer usually sits closer to analytics and BI consumption, helping users query data through business-friendly metrics and terms.

Why does metadata matter in the modern data lifecycle?

Metadata connects business definitions, source systems, models, transformations, lineage, governance and deployment. When metadata is captured early, teams can automate more of the lifecycle and reduce manual rework.

How does automation support the modern data lifecycle?

Automation turns repeatable metadata and design patterns into deployable structures, code, jobs, documentation and lineage. It helps teams deliver faster while improving consistency and governance.

How do ER/Studio and WhereScape work together?

ER/Studio helps organizations model and govern data architecture. WhereScape helps turn metadata and models into automated data infrastructure, including design, development, deployment, documentation and lineage.